‘Atlas of AI’ by Kate Crawford: a review

Large-scale AI systems consume enormous amounts of energy. Yet the material details of those costs remain vague in the social imagination.

16 AUGUST 2023 · 09:34 CET

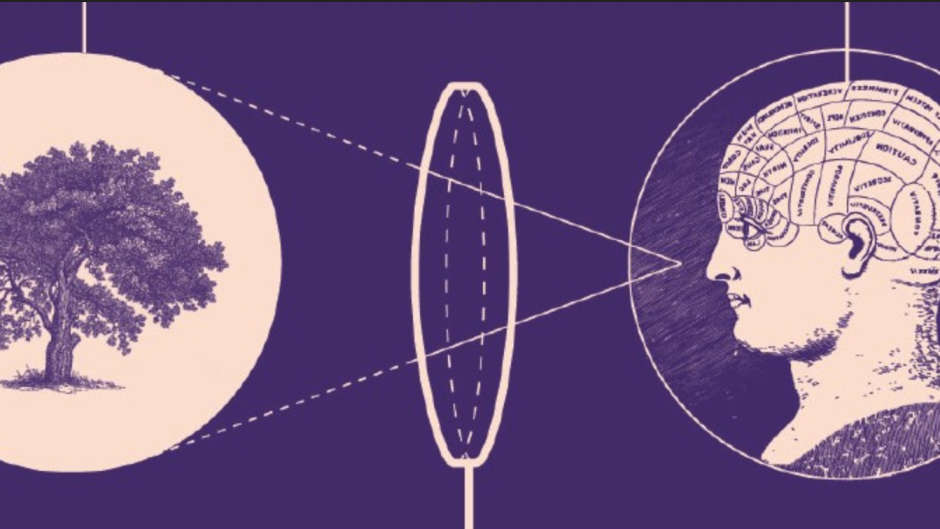

Kate Crawford, the Australian academic, opens with a clear statement: AI is neither artificial nor intelligent. She writes (p.8): ‘rather, artificial intelligence is both embodied and material, made from natural resources, fuel, human labour, infrastructures, logistics, histories, and classifications.

AI systems are not autonomous, rational, or able to discern anything without extensive, computationally intensive training with large datasets or predefined rules and rewards. In fact, artificial intelligence as we know it depends entirely on a much wider set of political and social structures.

And due to the capital required to build AI at scale and the ways of seeing that it optimizes AI systems are ultimately designed to serve existing dominant interests. In this sense, artificial intelligence is a registry of power’.

A copy of the book. / Katecrawford.net

Introduction

Crawford tackles this challenging subject through a three-pronged approach: power, politics, and the planet.

She writes:

“[Every way of] defining artificial intelligence is doing work, setting a frame for how it will be understood, measured, valued, and governed. If AI is defined by consumer brands for corporate infrastructure, then marketing and advertising have predetermined the horizon. If AI systems are seen as more reliable or rational than any human expert, able to take the “best possible action,” then it suggests that they should be trusted to make high-stakes decisions in health, education, and criminal justice. When specific algorithmic techniques are the sole focus, it suggests that only continual technical progress matters, with no consideration of the computational cost of those approaches and their far-reaching impacts on a planet under strain.”

Crawford continues, setting out her argument: “We need a theory of AI that accounts for the states and corporations that drive and dominate it, the extractive mining that leaves an imprint on the planet, the mass capture of data, and the profoundly unequal and increasingly exploitative labour practices that sustain it.”

Her theory of extraction goes beyond the normal nomenclature we use for AI. AI systems are typically extractive or generative – the former extracting interesting information or data, often from very large data sets, and the latter generating original content based on input data.

Generative systems such as ChatGPT can create music, art, images, essays, and news reports. Crawford's theory of extraction is broader: it is based on the extractive processes used for raw material mining, the extraction and capture of mass data sets, and the extraction of human labour from increasingly bonded and servile roles.

Corporate power and the military-technological complex

When we look at the energy and momentum around AI technology at the moment, we see many of the dynamics of historical gold rushes: chasing rumours, announcements of ‘great finds’, almost frenzied excitement surrounding the latest ‘unicorn’.

Having pondered that image a little longer, we wonder whether the hype surrounding the emergence of the railroads is not closer to the mark. AI is offering fundamental structural change in society.

It isn’t just about another small number of people becoming very, very rich – though that seems to be part of it. Kate Crawford describes AI as being all about technology, capital (money), and power.

For those reasons alone, it is important that governments – democratic or not – have a growing interest in everything pertaining to these technologies.

Kate Crawford describes the slightly troubled dynamic of co-operation between private business and government. This collaboration has often, but not always, been willingly offered.

One of the strengths of this book is the rich and well-chosen selection of examples. She starts with the usual defence and security service examples.

She asks (and answers) why the military and security services want to collaborate with academia and private companies in particular. She cites the following reasons driving that dynamic:

- Private companies often seem to manage technology breakthroughs more rapidly than state or academic research institutes: money is a potent motivator.

- By outsourcing elements of their surveillance activities, public bodies are able to get around constitutional, legal and ethical controls.

- Governments want access to the levers of power that advanced technologies offer to help manage, steer, influence, and sometimes control society.

However, when these collaborations are brought into the public eye, whether by Edward Snowden or through something like Project Maven (a collaboration between Google and the DoD looking to get innovation battlefield-ready far earlier than has historically been the case), they have caused significant embarrassment. That embarrassment falls both on the democratic governments responsible for the security services and on the private companies that want to be perceived as the ‘good guys’ by customers, shareholders, employees, and the public at large.

Crawford builds on the argument made by Shoshana Zuboff concerning asymmetry of power (The Age of Surveillance Capitalism).

In Zuboff’s case the focus was primarily on private companies.

Kate Crawford adds to that picture of imbalance, highlighting that these companies are extending their surveillance activities while multiplying their personalised datasets with the blessing of state authorities, often with scant regard for potential adverse consequences, invasion of privacy, or other harms.

The author argues that, far from acting as a force for democratisation, these advanced technologies are amplifying established societal imbalances and generating new ones.

Crawford argues that too much of the discussion around the impacts of these new technologies is framed in one of two extreme perspectives, which she labels:

- Techno-utopianism – where technology is the panacea for all known problems: disease, famine, the environment, even crime; or,

- Techno-dystopianism – where all roads lead to inevitable super-intelligence and likely destruction or subjugation of humanity.

It is very difficult to have thoughtful debate where perspectives have become so polarized.

Human costs of extraction

Crawford moves on from corporate power and military partnerships to consider labour and the human costs of extraction. Extractive mining has a long history of abusing human miners, and sadly extractive AI continues this tradition.

Amazon fulfilment centres are a case in point. Crawford studies these carefully and relates the demands of efficiency burdening each labourer.

Every second of work is monitored. Workers are allowed off-task for a total of 15 minutes in an 8- to 9-hour shift.

Every worker must achieve the same “picking rate”, and those who fail to maintain it can be disciplined and then fired.

Minor injuries are common, and part of the burden of fast, efficient packaging in such centres.

In these environments, humans are there to complete the fiddly tasks that robots cannot do. Alternatively, we can interpret this as the human workers having to adapt to the deficiencies of the robots.

In these centres, humans are increasingly treated as though they were robots and corporate management is not shy about the ultimate goal: to dispense with humans altogether.

In Amazon fulfilment centres, the bodies of workers run to a computational logic. The trifecta of this logic is efficiency, surveillance, and automation. It is essential for the managers to extract the maximum of economic value from the body of each worker.

Therefore, efficiency demands place an increased emphasis on conformity, standardisation, and interoperability.

These processes and tendencies started long before AI, for example in the development of the production line in Ford factories, with Taylorist management practice and time and motion studies. Extractive AI is a continuation of the same processes, which appear fundamental to maximising efficiency and output.

Extraction is often presented as a ‘beneficial human-AI collaboration’ – but in reality, the collaboration is not fairly negotiated. It is a forced engagement with the machines since workers have a stark choice: you either adapt or get out.

Mechanical Turk is another example Crawford gives of human-fuelled automation. Workers are poorly paid, far below minimum wage, and may make only a few USD per hour of work.

This type of work is emblematic of the race to the bottom, even though most of these workers are highly educated.

The darker side of this extractive logic is often hidden from view, and carefully concealed by the large tech corporations.

Crawford gives the example of human content moderators for social media (YouTube, Facebook, etc.), who have to monitor and manage distressing or explicit content online: beheadings, torture, rape, self-harm, even suicide.

It has been documented that high levels of mental health issues, including PTSD, are found amongst content moderators as a result of such taxing work.

The true human labour costs and impacts of extractive AI are constantly downplayed and underestimated. The tech companies themselves have a vested interest in ensuring this is the case.

Yet in public society, more broadly, there is a lack of awareness and consciousness of the behind-the-scenes reality of these impacts. Crawford’s book provides a chilling account of the hidden human costs of extractive AI.

The organisation and unionisation of tech workers provides one route for improving working conditions, but there seems to be a lack of other workable positive solutions which might minimise the harms.

Planetary extraction

Launching into the third theme, Crawford gives the case study of the Amazon Echo, or Echo Dot, where the Amazon digital assistant Alexa can help answer questions and respond to commands – turn on the lights, etc.

“Alexa is training to hear better, to interpret more precisely, to trigger actions that map to the user’s commands more accurately, and to build a more complete model of their preferences, habits and desires. What is required to make this possible? Put simply: each small moment of convenience – be it answering a question, turning on a light, or playing a song – requires a vast planetary network, fuelled by the extraction of non-renewable materials, labour, and data”

Take lithium, for instance. Smartphone batteries usually contain less than eight grams of this material, yet it is critical to battery function and health, albeit in a device with a short lifespan.

Amnesty International have investigated cobalt, another key precious metal for battery production. In the Congo, workers are paid around 1 US dollar per day for working in conditions hazardous to life and health.

Amnesty has documented children as young as 7 working in the mines for 1 USD per day. In contrast, Amazon CEO Jeff Bezos made an average of $275 million a day pre-pandemic, according to the Bloomberg Billionaires Index.

A child working in a mine in the Congo would need more than 700,000 years of non-stop work to earn the same amount as a single day of Bezos’ income.
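The comparison can be checked with a quick back-of-the-envelope calculation. The sketch below is ours, not Crawford's: it uses only the two figures quoted above (1 USD per day and $275 million per day) and assumes, for simplicity, a 365-day year with no days off. The function and variable names are purely illustrative.

```python
# Figures quoted in the review; the 365-day year is a simplifying assumption.
BEZOS_INCOME_PER_DAY_USD = 275_000_000
MINER_WAGE_PER_DAY_USD = 1
DAYS_PER_YEAR = 365


def years_to_match(target_usd, wage_per_day_usd, days_per_year=DAYS_PER_YEAR):
    """Years of non-stop daily work needed to earn target_usd."""
    days_needed = target_usd / wage_per_day_usd
    return days_needed / days_per_year


years = years_to_match(BEZOS_INCOME_PER_DAY_USD, MINER_WAGE_PER_DAY_USD)
print(f"{years:,.0f} years")  # prints "753,425 years"
```

Under these assumptions the result is roughly 753,000 years, which is consistent with the "more than 700,000 years" quoted above.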

Mark Graham in Oxford – at the OII – helps clarify the opaqueness we perceive: “contemporary capitalism conceals the histories and geographies of most commodities from consumers.

Consumers are usually only able to see commodities in the here and now of time and space, and rarely have any opportunities to gaze backwards through the chains of production in order to gain knowledge about the sites of production, transformation, and distribution”.

Data extraction

Finally, Crawford writes on the vast apparatus needed to organise, capture, and categorise data.

She also calls this a logic of extraction, basing her argument on the need for large corporations to harvest as much data as possible in order to gain competitive advantage.

As an example, ChatGPT has been trained on 300bn words and has around 175bn parameters, even though the English language has only around 60,000 words in active use today. This gives us a sense of the scale of generative AI operations.

This argument overlaps significantly with Shoshana Zuboff’s “The Age of Surveillance Capitalism”, which we have discussed and reviewed in the past.

As we stated at the outset, large-scale AI systems consume enormous amounts of energy. Yet the material details of those costs remain vague in the social imagination.

It remains difficult to get precise details about the amount of energy consumed by cloud computing services.

Crawford writes about the “availability of open-source tools…in combination with rentable computation power through cloud superpowers such as Amazon (AWS), Microsoft (Azure), or Google Cloud is giving rise to a false idea of the ‘democratization’ of AI.”

Yet she continues:

“while ‘off the shelf’ machine learning tools, like TensorFlow, are becoming more accessible from the point of view of setting up your own system, the underlying logics of those systems, and the datasets for training them are accessible to and controlled by very few entities. In the dynamic of dataset collection through platforms like Facebook, users are feeding and training the neural networks with behavioural data, voice, tagged pictures and videos or medical data. In an era of extractivism, the real value of that data is controlled and exploited by the very few at the top of the pyramid”.

The new infinite horizon is data extraction, machine learning, and reorganizing information through artificial intelligence systems of combined human and machinic processing.

The territories are dominated by a few global mega-companies, which are creating new infrastructures and mechanisms for the accumulation of capital and exploitation of human and planetary resources.

Crawford writes:

“The process of quantification is reaching into the human affective, cognitive, and physical worlds. Training sets exist for emotion detection, for family resemblance, for tracking an individual as they age, and for human actions like sitting down, waving, raising a glass, or crying. Every form of biodata – including forensic, biometric, sociometric, and psychometric – are being captured and logged into databases for AI training.”

Conclusion

Crawford is clearly both an academic and an activist. As such, the book mixes clear and reasoned argumentation on AI (though with little detailed insight into ML operations) with anecdotal and activist knowledge on labour practices, front-line experiences, institutional change, government (ir)responsibility, business lobbying, and political shifts.

Much like the Shoshana Zuboff book, this book provides a very valuable and insightful description of the problems. The two books also illustrate how deeply these problems are rooted in extraordinarily powerful and well-defended western capitalist systems.

Jonathan Ebsworth is a technology management consultant and co-founder of TechHuman.

John Wyatt is Emeritus Professor of Neonatal Paediatrics, Ethics & Perinatology at University College London, and a senior researcher at the Faraday Institute for Science and Religion, Cambridge.

Samuel Johns is a writer and a social entrepreneur, working in both western Europe and Asia (Nepal).

This article was first published on TechHuman.org and has been re-published with permission.

Published in: Evangelical Focus - TechHuman - ‘Atlas of AI’ by Kate Crawford: a review