Eastern European trolls controlled large US Christian Facebook pages

According to a report, Facebook “has given the largest voice in the Christian American community to a handful of bad actors, who have never been to church”.

MIT, Relevant · CALIFORNIA · 07 OCTOBER 2021 · 13:20 CET

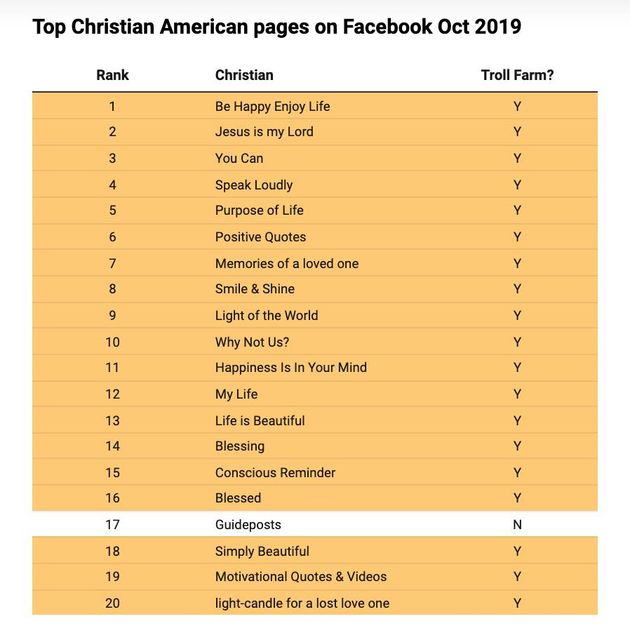

Nineteen of Facebook's top 20 pages for American Christians were managed by Eastern European troll farms, an internal report obtained by MIT Technology Review from a former Facebook employee reveals.

The troll farms (professionalized groups that work in coordination to post provocative content, often propaganda, to social media) are largely based in Kosovo and Macedonia. They were reaching 140 million US users per month, 75% of whom had never followed any of the pages.

The report points out that “collectively, their Christian Facebook pages reach about 75 million users a month, an audience 20 times the size of the next largest Christian Facebook page”.

“Instead of users choosing to receive content from these actors, it is our platform that is choosing to give [these troll farms] an enormous reach”, wrote the report’s author, Jeff Allen, a former senior-level data scientist at Facebook.

“Give the largest voice in the Christian American community to bad actors”

According to Allen, Facebook “has given the largest voice in the Christian American community to a handful of bad actors, who, based on their media production practices, have never been to church”.

“This is not normal. This is not healthy. We have empowered inauthentic actors to accumulate huge followings for largely unknown purposes”, he added.

The Facebook report, written in the lead-up to the 2020 U.S. presidential election, also found that these troll farms were reaching the same demographic groups singled out by the Kremlin-backed Internet Research Agency (IRA) during the 2016 election, which had targeted Christians, Black Americans, and Native Americans.

In 2018, a BuzzFeed News investigation revealed that at least one member of the Russian IRA, indicted for alleged interference in the 2016 US election, had also visited Macedonia around the time its first troll farms emerged, though it found no concrete evidence of a connection.

Five troll-farm pages remain active

Joe Osborne, a Facebook spokesperson, said in a statement that at the time of Allen's report the company “had already been investigating these topics; we stood up teams, developed new policies, and collaborated with industry peers to address these networks”.

“We have taken aggressive enforcement actions against these kinds of foreign and domestic inauthentic groups and have shared the results publicly on a quarterly basis”, stressed Osborne.

However, in the process of fact-checking this story shortly before publication, MIT Technology Review found that “five of the troll-farm pages mentioned in the report remained active”.

Possible solution

Although Facebook had been aware of the troll farms and their manipulation since 2016, it did little to address the issue, even though Allen's report suggested a possible solution, noting that “this is far from the first time humanity has fought bad actors in our media ecosystems”.

Allen pointed to Google’s use of what is known as a graph-based authority measure to demote bad actors in its search rankings. Such a measure assesses the quality of a web page according to how it cites, and is cited by, other quality web pages.

“We have our own implementation of a graph-based authority measure. If the platform gave more consideration to this existing metric in ranking pages, it could help flip the disturbing trend in which pages reach the widest audiences”, concluded the former Facebook senior-level data scientist.
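To illustrate the general idea behind a graph-based authority measure, here is a minimal PageRank-style sketch. It is a generic textbook version of the technique, not Google's or Facebook's actual implementation, and the page names and link graph are hypothetical. Each page splits its current score among the pages it cites, so a page gains authority only when other well-scored pages cite it.

```python
# Minimal PageRank-style authority sketch (generic technique, not any
# company's production system). Page names and links are hypothetical.

def pagerank(links, damping=0.85, iterations=50):
    """Compute authority scores by power iteration.

    links maps each page to the list of pages it cites.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)
    scores = {p: 1.0 / n for p in pages}

    for _ in range(iterations):
        # Every page gets the same small baseline share ("random jump").
        new_scores = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page passes its score evenly to the pages it cites.
                share = damping * scores[page] / len(targets)
                for target in targets:
                    new_scores[target] += share
            else:
                # A page that cites nothing spreads its score over all pages.
                share = damping * scores[page] / n
                for target in pages:
                    new_scores[target] += share
        scores = new_scores
    return scores


if __name__ == "__main__":
    # Established pages cite each other; "new_page" cites others,
    # but no page cites it, so it keeps only the baseline share.
    links = {
        "church_news": ["ministry_blog", "bible_study"],
        "ministry_blog": ["church_news"],
        "bible_study": ["church_news", "ministry_blog"],
        "new_page": ["church_news"],
    }
    for page, score in sorted(pagerank(links).items(), key=lambda kv: -kv[1]):
        print(f"{page:15s} {score:.3f}")
```

In this toy graph, a page that attracts a large audience without being cited by any established page ends up with the lowest score, which is the kind of signal Allen suggested could weigh more heavily in ranking.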

Published in: Evangelical Focus - life & tech - Eastern European trolls controlled large US Christian Facebook pages